It's becoming more and more apparent that Siri continually drops the ball when it comes to lock screen security. In the past, Siri was exploited in iOS 7.0.2 to send messages without needing a passcode. Then in iOS 7.1.1, Siri was used to bypass the lock screen again to access contacts, make calls, and send emails and texts.

The method may have changed, but the unfortunate truth is that Siri is still penetrable in Apple's latest mobile operating system, iOS 8.0.2 (and even the iOS 8.1 beta). If you use Siri on an iPhone running iOS 7.0 or higher, read below to see how this exploit works and how you can protect yourself.

What This Bypass Can Do

With nothing more than a SIM eject tool, or any other small, pointed object that can eject the SIM tray, a stranger can access your emails and make unauthorized Twitter posts on your behalf.

This Siri bypass will not unlock your iPhone, but serious damage can still be done with access to an email account that may hold sensitive information. Worst of all, this vulnerability affects all iOS devices running 7.0 and higher with an activated SIM card.

How It Works

Wi-Fi must first be disabled on the device, either from the Control Center or, if the Control Center is disabled on the person's lock screen, with the Siri voice command "Disable Wi-Fi." Then, you can speak one of the following commands on their lock screen:

- "Siri, read me all my messages"

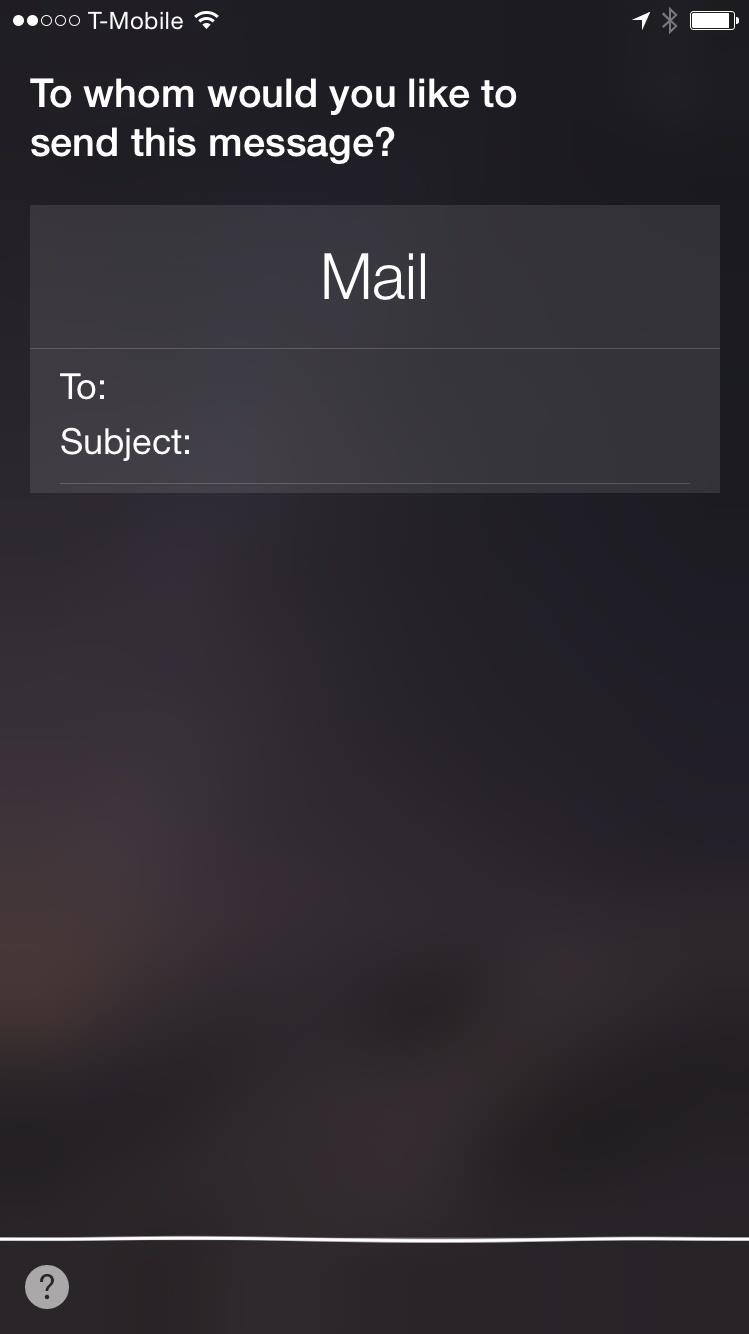

- "Siri, send an email"

- "Siri, show me my emails"

- "Siri, read me my latest email"

- "Siri, post to Twitter"

Siri will then ask you for the passcode. Just select Cancel, pop out the SIM tray using the eject tool, and pop the SIM back in. While the device reconnects to the carrier, select Edit on the spoken request and slightly modify the text (ex. "Siri, show me my emails" to "Siri, show me emails"), then tap Done once the device has reconnected.

Take a look at the video below provided by Everything ApplePro to see exactly how this exploit works.

How to Protect Yourself

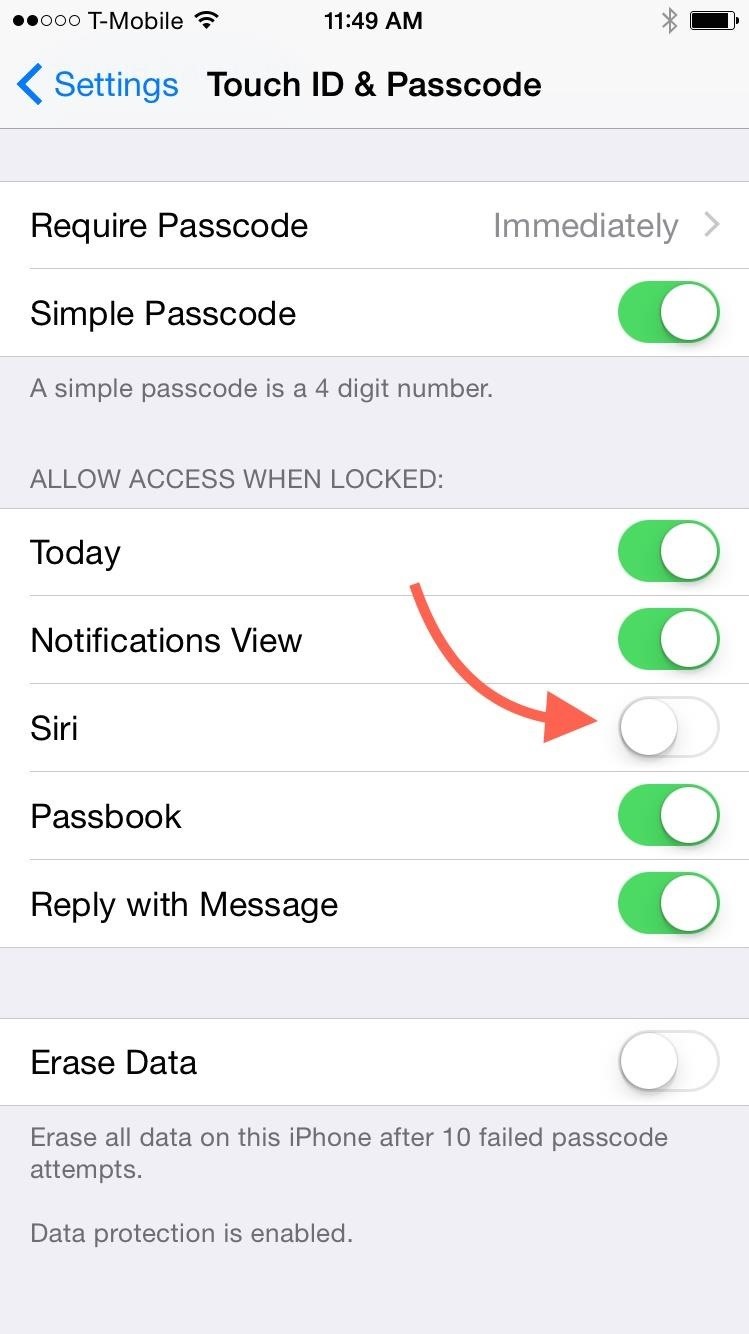

The solution is simple, but disappointing if you regularly use Siri from your lock screen. You will need to disable Siri from the lock screen by navigating to Settings -> Touch ID & Passcode and toggling Siri off.

With more and more users being made aware of such a serious exploit, we can only hope that Apple will patch this up before the official iOS 8.1 release, or at least an update immediately after.

Share your thoughts on the latest in an unsettling stream of lock screen exploits in the comments below, as well as on Facebook and Twitter.
