Hello folks, in this tutorial I'll show you how to use a very simple command-line tool for Linux called MCD's Colloide to find files, directories, admin pages and database backups hidden inside websites. The tool is meant for finding admin login pages, but it also lets you use your own dictionary / wordlist of possible filenames or directories. Here, I'll show you some commands for finding the admin login pages of vulnerable websites. You can clone Colloide from GitHub here: https://github.com/MichaelDim02/colloide

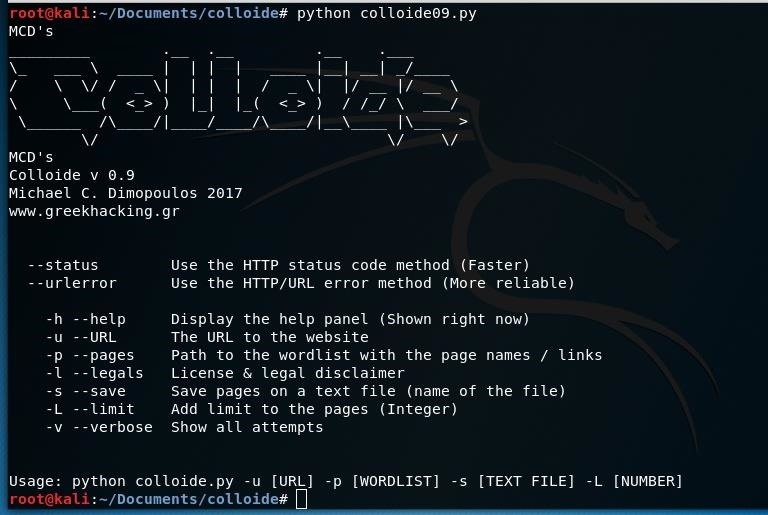

After downloading it to your Linux machine, run the tool by typing python colloide09.py. This is what you should see:

As you can see, the tool has two methods for spotting working pages or files. The first, status, is selected with --status and checks whether the page returns a 4xx status code such as 404 (Not Found). The second, urlerror, is selected with --urlerror and checks whether requesting the page produces an error. The status method is a lot quicker, but less reliable than the urlerror method.
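To make the difference between the two kinds of check more concrete, here is a minimal Python 3 sketch. It is not Colloide's actual code: the function names found_by_status and found_by_urlerror and the opener parameter are made up for illustration, and the sketch assumes the standard urllib module is used for the requests.

```python
from urllib.error import HTTPError, URLError
from urllib.request import urlopen


def found_by_status(url, opener=urlopen):
    """Treat the page as found unless the server answers with a 4xx code."""
    try:
        with opener(url, timeout=10) as response:
            code = response.status
    except HTTPError as err:
        code = err.code  # urllib raises HTTPError for 4xx and 5xx responses
    except URLError:
        return False  # no HTTP response at all (DNS failure, refused connection)
    return not 400 <= code < 500


def found_by_urlerror(url, opener=urlopen):
    """Treat the page as found only if the request completes without an error."""
    try:
        with opener(url, timeout=10):
            return True
    except URLError:  # HTTPError is a subclass of URLError, so it is caught too
        return False
```

Calling found_by_status("http://www.example.com/admin/") would send one request and return True or False. Note that in this sketch a 5xx response counts as found for the status check but not for the error check, which is one way the two approaches can disagree.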

To set the website you want to scan, type -u <websiteurl>, e.g. -u www.google.com (no protocol).

To set the wordlist, type -p <wordlistpath>, e.g. -p links.txt or -p specificlinks/phplinks.txt

MCD's Colloide comes with many dictionaries / wordlists for admin pages, but you can use whatever you want. You can limit the number of found pages with -L <integer>, e.g. -L 10, and you can save a report of the working pages with -s <txtfile>, e.g. -s workingpages. Good luck! Here are some possible commands:

python colloide09.py --status -u www.example.net -p links.txt -L 3

python colloide09.py --urlerror -u www.example.com -p ../../Desktop/mywordlist.txt -s reportfile

python colloide09.py --urlerror -u www.example.us -p specificlinks/asplinks.txt
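If you are curious what a dictionary scan with a limit and a report file roughly involves, here is a small Python sketch. It is only an illustration and not how Colloide itself is written: the names scan and save_report, the is_found callback, and the choice to prepend http:// to the host are all assumptions made for this example.

```python
def scan(host, words, is_found, limit=None):
    """Return the candidate URLs that is_found accepts, stopping at limit hits."""
    hits = []
    for word in words:
        path = word.strip()
        if not path:
            continue  # skip blank wordlist lines
        # Assumption: the host is given without a protocol, so add http://
        url = "http://" + host.rstrip("/") + "/" + path.lstrip("/")
        if is_found(url):
            hits.append(url)
            if limit is not None and len(hits) >= limit:
                break  # enough working pages found
    return hits


def save_report(hits, report_path):
    """Write one working URL per line to a text file."""
    with open(report_path, "w", encoding="utf-8") as handle:
        for url in hits:
            handle.write(url + "\n")
```

Here is_found is any function that takes a URL and returns True when the page appears to exist, and words can be an open wordlist file, since iterating over a file yields its lines one at a time.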

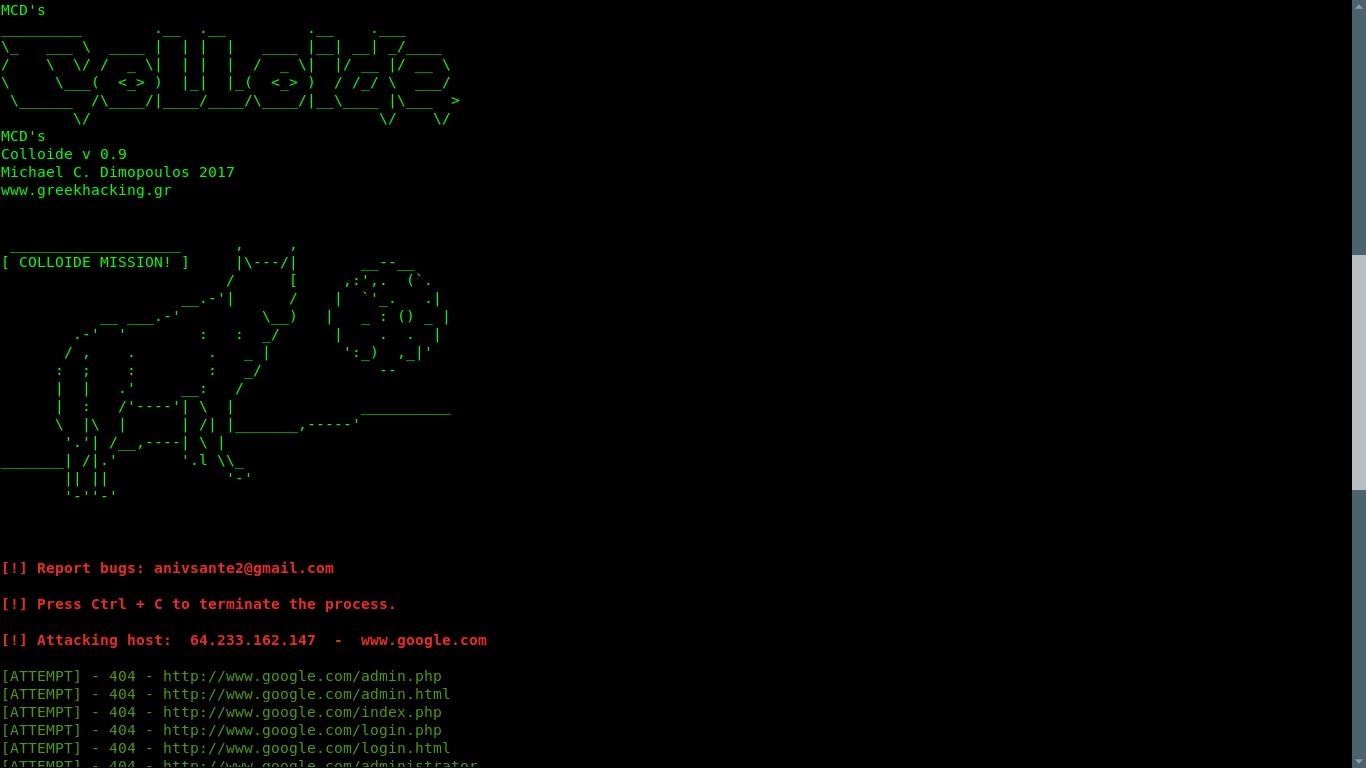

Here are some illustrations (not by me).

Note: This is not the actual program being used. It is a clone that prints ATTEMPT .... just for the sake of the illustrations.

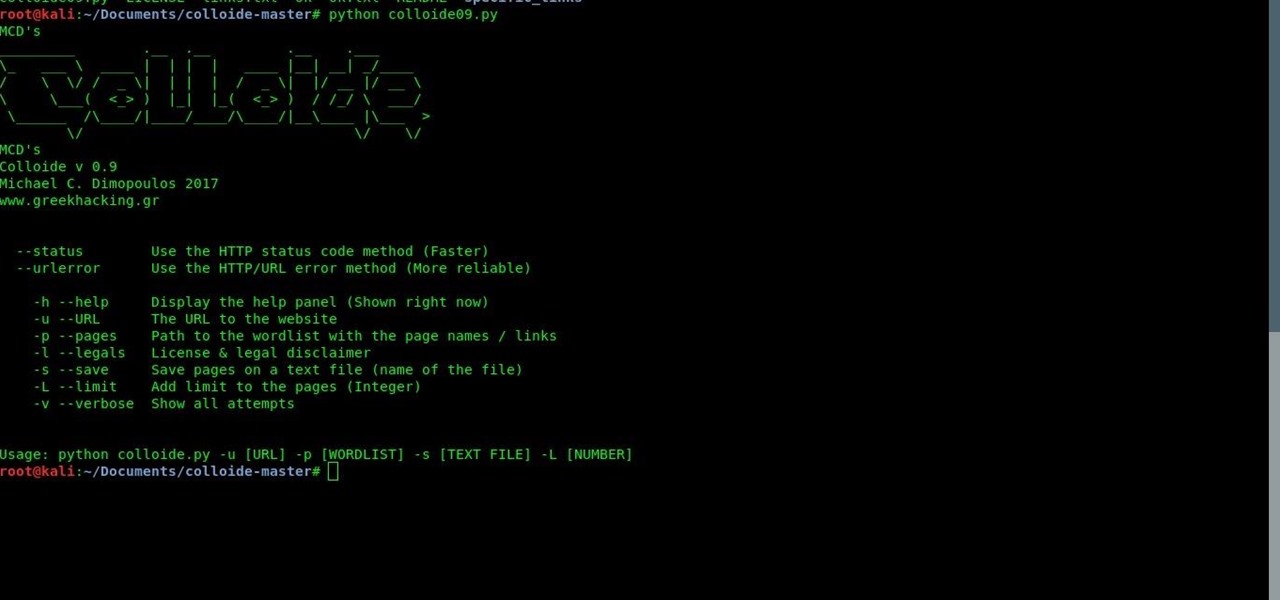

Same with the screenshot here.
